Pagination may seem like an implementation detail, but in systems with millions of records, it becomes a critical architectural decision. In APIs handling large data volumes, the pagination strategy directly impacts performance, consistency, computational cost, and scalability.

In this article, I analyze three common approaches:

- Page Number Pagination

- Offset Pagination

- Cursor Pagination (Keyset Pagination)

The tested scenario:

- Java + Spring Boot API (language and framework are irrelevant for this analysis)

- PostgreSQL

- Table with 10 million records

- Queries ordered by

updated_at(timestamp),id - Composite index

(updated_at, id) - Incremental resync operations between systems, returning hundreds of thousands of paginated records

I included a benchmark using EXPLAIN ANALYZE for deeper inspection.

Let’s begin.

Page Number Pagination

What it is

The client sends:

GET /resources?page=5&size=100

Internally, the backend converts it to:

SELECT *

FROM resource

ORDER BY updated_at

LIMIT 100 OFFSET 400;

That is: offset = (page - 1) * size

Complexity, Performance and Cost for PostgreSQL

Even with an index on updated_at:

CREATE INDEX idx_resource_updated_at ON resource(updated_at);

PostgreSQL must:

- Traverse N index entries

- Discard the first records according to

OFFSET - Return the next records within the

LIMIT

If you request: page=5000&size=100, which results in Offset=499900. The database must traverse ~500k records to return 100.

Approximate complexity

- Time ≈ O(n) relative to the offset

- The larger the page number, the worse the performance

With 10 million records:

- Deep pages become extremely slow

- CPU and I/O usage may increase significantly

But when does it still make sense?

✔ User interfaces ✔ Administrative dashboards ✔ Small or mostly static datasets

In the following scenarios, it is recommended to avoid this approach:

❌ Large-scale resync ❌ Massive exports ❌ Continuous streams

Offset Pagination (Explicit)

In practice, this is the same as page number pagination, but the API exposes:

GET /resources?offset=400000&limit=100

SQL

SELECT *

FROM resource

ORDER BY updated_at

LIMIT 100 OFFSET 400000;

Real difference?

From the database perspective: none.

The difference exists only at the API contract level.

This approach presents the same problems as the previous one:

- Large OFFSET → large scan

- Linearly increasing cost

Shift Problems

An additional issue with the previous two approaches is caused by possible ordering shifts.

Imagine the ordering is based on updated_at and you fetch two pages of 10 items each, totaling 20 items.

You fetch the first page with items 1 through 10. Before fetching the second page, item 8 (or any item from the first page) is updated.

Item 8 has its updated_at changed to a more recent value, moving it to the end of the, now position 20.

The problem is that item 11, which should have been the first item of the second page, is now shifted to position 10. When you fetch the second page, the original item 11 is not there, because it was moved to the previous page.

In this case, you will never fetch the original item 11 (now item 10).

This has the potential to generate severe inconsistencies in resync operations.

Cursor Pagination (Keyset Pagination)

What it is

Instead of skipping N records, you use the last ordering value as the continuation point.

Example:

GET /resources?cursor=2025-02-01T10:15:33Z&limit=100

SQL

SELECT *

FROM resource

WHERE updated_at > :cursor

ORDER BY updated_at ASC

LIMIT 100;

However, there is a hidden issue with this approach: if ordering is based only on updated_at, there is a risk that items updated within the same microsecond (for example, during bulk updates) may be unintentionally skipped.

Some strategies to avoid this problem:

Use composite ordering, including a field such as ID:

Recommended index for optimal performance:

CREATE INDEX idx_resource_updated_at_id

ON resource(updated_at, id);

SELECT *

FROM resource

WHERE (updated_at, id) > (:updated_at, :id)

ORDER BY updated_at, id

LIMIT 100;

In this scenario, two fields are required to generate a composite cursor (:updated_at, :id).

If you prefer to hide this complexity from the API consumer, you can encapsulate both values into a single token using Base64 or even wrap them in a UUID.

Another strategy would be to replace the simple updated_at timestamp with an update_id (update identifier) field of type ULID. This type of field maintains a sortable temporal component while resolving conflicts with a random component. However, this approach is only valid if the ULID is generated on every update, semantically replacing updated_at as the ordering criterion.

Performance aspects of the Cursor approach

With a proper index:

- Index range scan

- Complexity ≈ O(log n) to locate the starting point

- Then only sequential index reads

Performance remains practically constant for deep pagination, since the dominant cost becomes O(log n), which grows very slowly.

Cursor and Resync Between Systems

This is the ideal scenario for cursor-based pagination.

Typical flow:

System B stores the last

(updated_at, id)Calls the API:

GET /resources?cursor=lastSeenProcesses the batch

Updates the checkpoint

Continues

This guarantees:

✔ No skipped records ✔ No duplicated records ✔ Scales to millions ✔ Works with mutable data

Consistency and Concurrency

Offset

Problem:

Page 1 → records 1–100

Page 2 → records 101–200

If a record between 1–100 is updated and moved in the sort order:

- It may reappear

- It may create gaps

Cursor

Cursor-based query:

WHERE updated_at > last_seen

It does not depend on position.

It depends only on the ordered value.

Much more robust.

I/O and Memory Impact

Large OFFSET

- Large index traversal

- Massive discard of rows

- Increased buffer usage

- Potential read amplification

Cursor

- Pure range scan

- Minimal reads

- Better cache usage

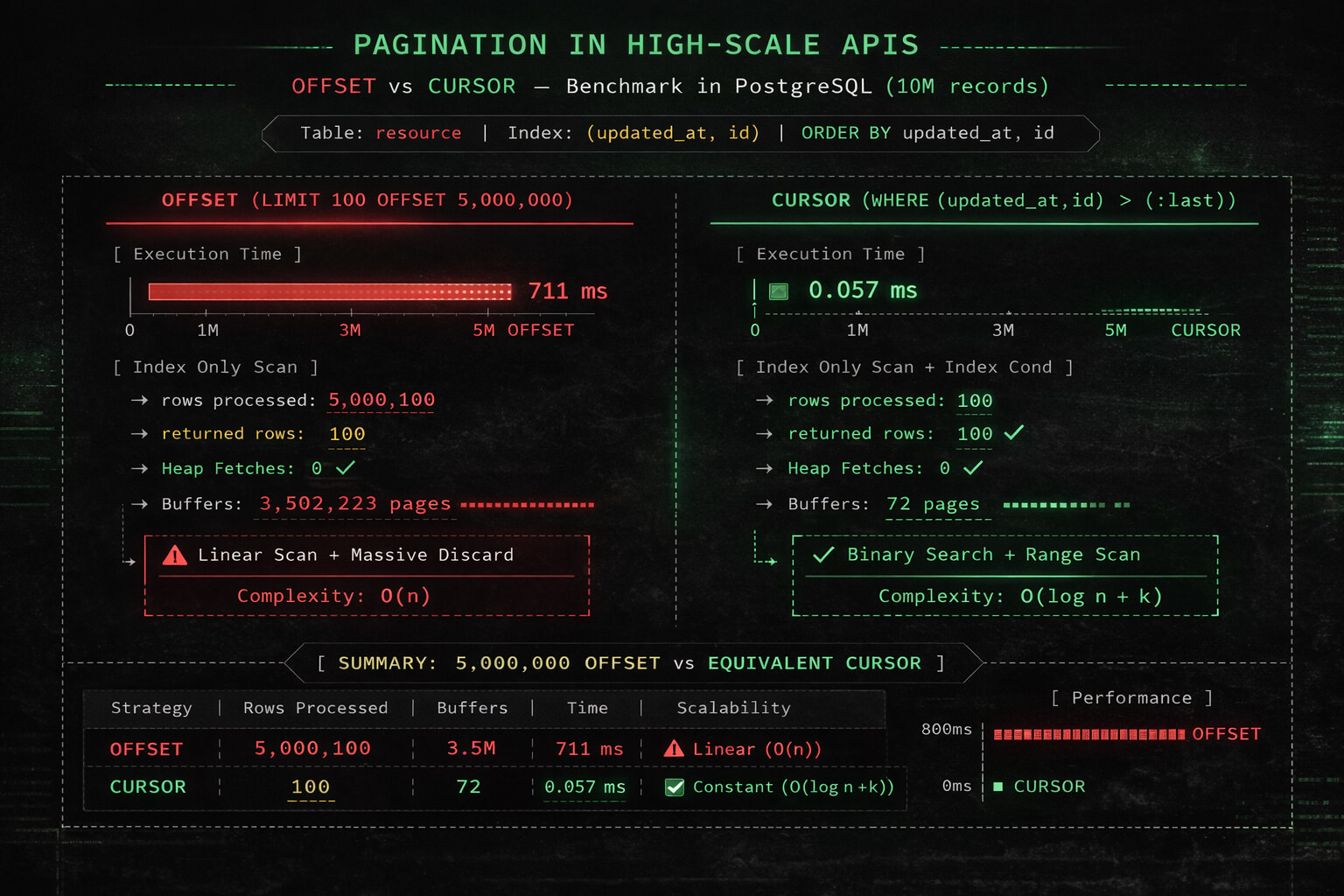

Practical Test

The OFFSET Problem

EXPLAIN (ANALYZE, BUFFERS)

SELECT id, updated_at

FROM resource

ORDER BY updated_at, id

LIMIT 100 OFFSET 5000000;

Limit

-> Index Only Scan using idx_resource_updated_at_id

rows=5000100

Heap Fetches: 0

Buffers: shared hit=3502223

Execution Time: 711 ms

What actually happened?

Even with:

- Perfect index

- Index-only scan

- No heap fetch

- Data already cached

The database had to:

- Traverse 5,000,100 records

- Discard 5,000,000

- Return only 100

Buffers accessed: 3,502,223 pages

This means millions of internal index structure accesses.

OFFSET is position-based

When executing:

SELECT id, updated_at

FROM resource

ORDER BY updated_at, id

LIMIT 100 OFFSET 5000000;

PostgreSQL must:

- Traverse the ordered index

- Advance through 5,000,000 entries

- Discard them

- Return the next 100

In other words, the database processed millions of entries to deliver only 100 records.

If OFFSET doubles, execution time roughly doubles.

Even in the best possible scenario (index-only, no disk I/O), the cost remains high.

Cursor (Keyset Pagination)

EXPLAIN (ANALYZE, BUFFERS)

SELECT id, updated_at

FROM resource

WHERE (updated_at, id) > ('2025-09-02 11:34:49.908717', 3736661)

ORDER BY updated_at, id

LIMIT 100;

Limit

-> Index Only Scan using idx_resource_updated_at_id

Index Cond: (ROW(updated_at, id) > (...))

Heap Fetches: 0

Buffers: shared hit=71 read=1

Execution Time: 0.057 ms

What happened here?

PostgreSQL:

- Navigated the B-tree to locate the correct leaf (cost O(log n))

- Located the exact starting point

- Read only 100 records

Buffers accessed: 72 pages

| Strategy | Buffers |

|---|---|

| OFFSET 5M | 3.502.223 |

| Cursor | 72 |

Difference: ~48,000x fewer pages accessed

Cursor uses an indexed range scan: T(n) ≈ O(log n + k) Where:

- n = table size

- k = page size

Execution time remains practically constant relative to pagination depth, even with 10 million records.

Final Comparison

| Criteria | OFFSET | Cursor |

|---|---|---|

| Complexity | O(n) | O(log n + k) |

| Buffers (5M) | 3.5M | 72 |

| Execution Time | 711 ms | 0.057 ms |

| Depth Scaling | Linear | Logarithmic |

| Stable Under Mutation | No | Yes |

| Ideal for UI | Yes | Partial |

| Ideal for Resync | No | Yes |

Conclusion

In a dataset with 10 million records:

- OFFSET scans millions of entries

- Cursor starts exactly at the required point

- The difference can exceed 10,000x

- OFFSET cost is structural, not circumstantial

For systems that perform:

- Resync

- Massive exports

- Service integration

- Incremental processing

Keyset Pagination is an architectural requirement, not merely an optimization

OFFSET is not inefficient due to poor implementation; it is structurally linear because of how B-tree indexes work.

When does OFFSET still make sense?

✔ Administrative interfaces ✔ Small tables ✔ Mostly static datasets ✔ When jumping to arbitrary pages is required

But not in large-scale systems.

Empirical results make it clear:

OFFSET degrades linearly even in the best possible scenario. Cursor maintains stable performance relative to pagination depth, even with millions of records.

If you are designing an API backed by PostgreSQL and expect growth beyond a few million rows, the pagination strategy must be decided from the beginning.

That's all folks...

Artus